Introducing BLUR: A Benchmark for Tip-of-the-Tongue Search and Reasoning

A Natural Search and Reasoning Task

We’re thrilled to introduce BLUR—a benchmark designed to evaluate how effectively AI agents can help you identify something you vaguely remember but can’t quite name. Think of it like moments when you’re trying to recall the name of a movie you’ve seen—you can picture the details clearly, but the name just won’t come to you. That movie with a rain-drenched neon city where a weary detective tracks down near-human androids, all while wrestling with questions of identity and humanity? Or that park you went to as a child in your hometown? BLUR tests the abilities of AI systems to handle these “tip-of-the-tongue” moments. TL;DR: existing agentic systems don’t do all that great at these queries, hovering around 50% of human performance. Surprisingly, agentic systems also don’t do that much better than their base language models, suggesting many potential areas of improvement!

Before we get to the meat of it—as a sneak peek:

- Perplexity Pro Search scores 0.27.

- o1-2024-12-17 scores 0.49, as does ChatGPT-4o, the system on OpenAI’s platform.

- Operator, OpenAI’s Computer-Using Agent, scores 0.54.

Developed by AI researchers at Patronus AI, BLUR is a carefully curated, high-quality dataset consisting of 573 tip-of-the-tongue question-and-answer pairs. This benchmark serves as a first-line evaluation tool for AI models, systems, and agents tasked with tip-of-the-tongue known-item retrieval. It addresses a challenging yet practical use case, reflecting how users may realistically engage with these systems in practice [Liao and Xiao, 2023], in a problem domain that is common in our daily lives.

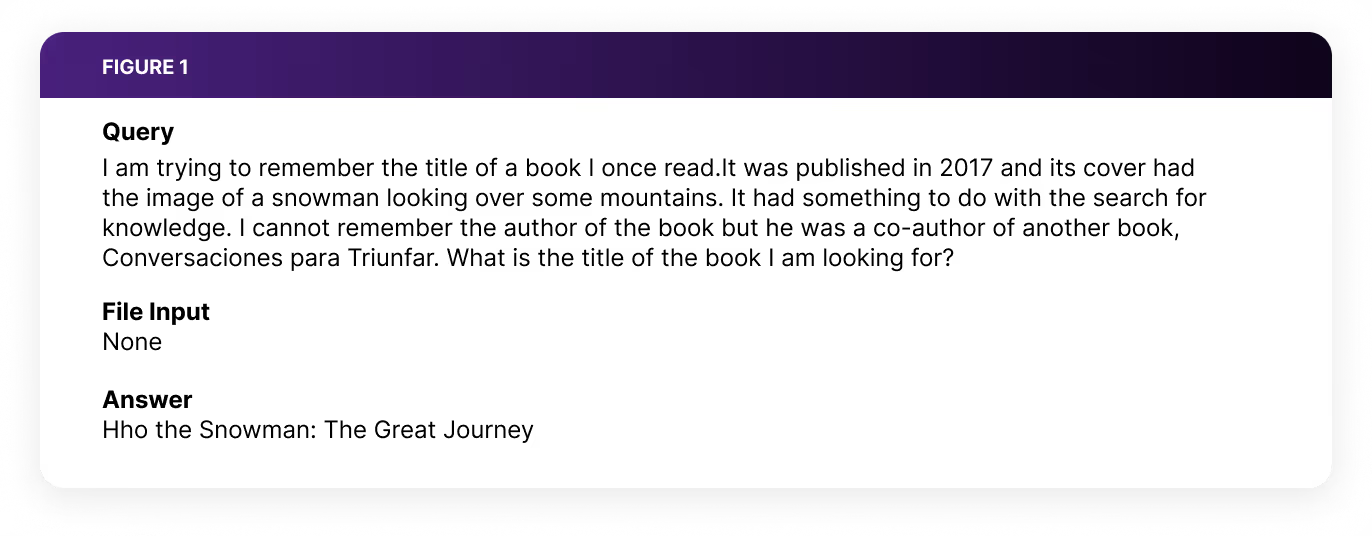

Questions in BLUR span a wide range of domains, including media and entertainment, places, arts and culture, people, experiences, sports, websites, and more. We intentionally avoided limiting the types of information people could use to describe the item they were trying to recall. In fact, 35% of the queries include a file attachment—such as a sketch of a movie character, a hummed tune of a song, or other clues—alongside the text-based query. Additionally, 30% of the queries are multilingual, reflecting the diverse ways people seek to retrieve information.

BLUR prompts are carefully designed to be easy to evaluate and verify for correctness with a simple LLM Judge—answers take the form of short strings. A key consideration in creating this dataset was to ensure answer unambiguity [Mialon et al, 2024]. In other words, could a human—like a friend or colleague—be tasked with finding the item for you and succeed, even if it might take them a few minutes, half an hour, or longer? And would they confidently agree that the provided answer is unambiguously correct [Rein et al, 2024]? Efforts went into crafting questions where there is a single, clear answer. We deliberately avoided cases that could lead to wild goose chases or ambiguity, where multiple potential answers might fit the query. This ensures the dataset remains practical, reliable, and aligned with real-world problem-solving scenarios. In creating this dataset, we deliberately avoided adversarial dataset construction. Such approaches not only obscure the specific abilities benchmarks aim to assess [Bowman and Dahl, 2021] but also compromise ecological validity [De Vries et al, 2020]—the alignment of tasks with real-world scenarios.

To gauge their difficulty, we tasked humans with answering the questions during our validation process, allowing them to freely browse the internet and use any tool at their disposal. The time they took to arrive at an answer served as a natural proxy for question difficulty—an indicator of how hard these queries were for humans to solve. Based on these times, we categorized queries into three levels: easy (under 10 minutes), medium (10–20 minutes), and hard (more than 20 minutes). This classification provides a clear framework for evaluating AI performance against human measures of difficulty.

Easy for Humans, Hard for Existing Models and Agentic Systems to Solve

So, how challenging are these queries? Our analysis shows humans significantly outperform state-of-the-art models, systems, and agents on these tasks.

- Perplexity Pro Search scores 0.27.

- DeepSeek-R1—the language model only—scores 0.41.

- o1-2024-12-17 scores 0.49, as does ChatGPT-4o, the system on OpenAI’s platform.

- Operator, OpenAI’s Computer-Using Agent, scores 0.54.

- HuggingFace Agents, one of the best-performing agent harnesses, achieves the best performance out of all the systems tested, with 0.56 when using Claude 3.5 Sonnet as the underlying language model.

- DynaSaur, an agent that builds and executes its own tools with code, achieves a score of 0.50 of the time when using GPT-4o as the underlying language model.

- Humans, given enough time, fail on these questions only 2% of the time.

Agents Aren’t That Much Better Than Their Base Models

We observed that the best agents and systems performed only marginally better than their base models, indicating that the current methods these models use to leverage available tools are not particularly effective for this task. What surprised us was how well base language models performed on this task, even without agent orchestration. This makes a lot of sense: given their pre-training on vast amounts of internet data, the best models like o1 are particularly effective at matching a person’s vague query—like a description of a movie—with the extensive parametric knowledge these models already contain (which would include countless movie scripts and related information), to effectively surface the correct answer. What supports these conclusions are our observations of how these base models struggled more with queries about places. Descriptions of less-remembered locations are unlikely to appear frequently in internet text; in such cases, effective tool use and agentic orchestration are crucial for better performance at this task.

A qualitative analysis of reasoning logs reveals several areas of failure in current systems capable of tool use.

The first area lies in contextual understanding. Even with multimodal capabilities, systems can misinterpret or overlook critical details. For instance, in searching for a “grey-colored battery pack with a black top,” Operator incorrectly identified a grey battery pack with black buttons as that item—an error that indicates lingering gaps in contextual comprehension and can propagate to downstream answers.

The second area lies in orchestration. Some systems prematurely terminate their search after finding an item that satisfies only part of the specified constraints. In contrast, systems like HuggingFace Agents iteratively verify whether additional constraints can be met before returning a final answer. Perplexity Pro, by comparison, performs a single retrieval-augmented generation step; if the correct answer does not appear in the retrieved documents, the system simply states it cannot answer the query. Their relative performance differences highlight the value of effective orchestration for query attempts and validation in succeeding at this task.

The third area lies in dealing with tool failures. Tools can fail—on occasion, API rate limits or access restrictions (e.g., YouTube sometimes being inaccessible to Operator) can block a system from retrieving key information. Instead of seeking alternate sources, systems were often observed to become stuck in a loop of repeated attempts to access the same site, ultimately failing to retrieve the needed data.

Finally, the fourth area lies in dealing with long contexts. Many queries require aggregating and verifying information from multiple sources, leading to lengthy action sequences. Over time, systems may lose track of the original query or become ensnared in an infinite loop of searches, preventing them from reaching a correct conclusion.

These observations highlight existing areas of improvement for models to improve further at this challenging task.

Benchmark Release

Today, we are releasing a public evaluation set of our questions and answers, along with a public test set (question only), while further retaining a subset of these questions as part of a fully private test set. This private subset ensures the integrity of future evaluations by preventing potential data contamination, a challenge that has undermined the validity of contemporary model and system evaluation [Sainz et al, 2023].

Read the full research paper: https://arxiv.org/abs/2503.19193

Check out the benchmark on HuggingFace: https://huggingface.co/datasets/PatronusAI/BLUR

References

Bowman, Samuel, and George Dahl. "What Will it Take to Fix Benchmarking in Natural Language Understanding?." Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. 2021.

De Vries, Harm, Dzmitry Bahdanau, and Christopher Manning. "Towards ecologically valid research on language user interfaces." arXiv preprint arXiv:2007.14435 (2020).

Liao, Q. Vera, and Ziang Xiao. "Rethinking model evaluation as narrowing the socio-technical gap." arXiv preprint arXiv:2306.03100 (2023).

Mialon, Grégoire, et al. "Gaia: a benchmark for general ai assistants." The Twelfth International Conference on Learning Representations. 2023.

Rein, David, et al. "Gpqa: A graduate-level google-proof q&a benchmark." First Conference on Language Modeling. 2024.

Sainz, Oscar, et al. "NLP Evaluation in trouble: On the Need to Measure LLM Data Contamination for each Benchmark." Findings of the Association for Computational Linguistics: EMNLP 2023. 2023.