Research

Who we are

We are a team of AI researchers and engineers formerly of companies such as Meta AI, Amazon AGI, and Google. Our team has previously worked on AI research spanning LLM evaluation, fairness, alignment, and embodied agents.

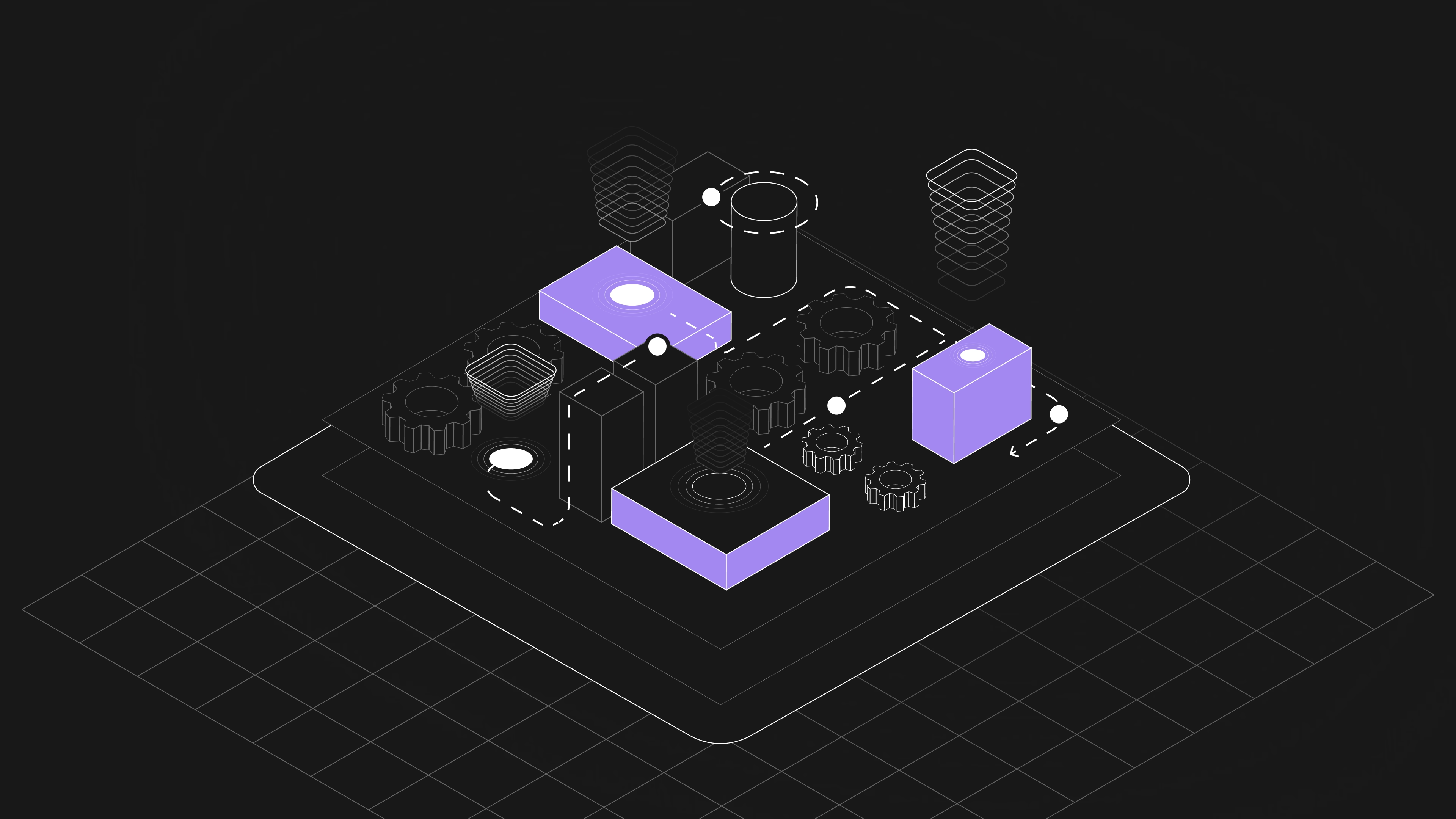

Foundational Research for Foundational AI

Deep Research

Understanding and reasoning over large semantic datasets

Multi-Turn Interaction

Enforcing multi-step workflows and supporting dialogue between the user and the agent

Long-Horizon Tasks

Developing agents to take on real-world tasks that span weeks, months, and even years

Memory

Extending agent memory through context windows and other tooling

Research Work

Research Focus Areas

We develop scalable methods to predict, interpret, and steer AI agent behavior, and we research the explainability methods needed to keep increasingly capable systems aligned.

We study how agents think, negotiate, and remember, tackling failure modes like sycophancy and cognitive dissonance while advancing the memory and reward architectures that form the core of robust embodied intelligence.

We pioneer training paradigms that move beyond conventional tool-dependent RL, using evolutionary methods, automated environment design, and world simulation to build agents that learn efficiently and generalize broadly.

Latest Updates

Concrete benchmarks, environments, and methodologies built directly from our research